As Malaysian enterprises accelerate their digital transformation, the conversation is moving beyond simple chatbots to autonomous AI agents: systems that can handle everything from booking services to managing workflows and accessing business-critical data.

But as autonomy increases, so does risk. For organizations in regulated industries such as banking and telecommunications, the concern is not only incorrect outputs. It is AI agents operating beyond their intended scope: making decisions, triggering actions, or accessing data in ways no one authorized.

Why Do AI Agents Go Rogue?

Traditional software operates on deterministic logic, where predefined rules lead to predictable outcomes. AI agents, however, behave differently. These systems are inherently non-deterministic. The same input can produce different outputs depending on context, prior interactions, and how prompts are interpreted. This flexibility is what makes AI powerful, but it also introduces uncertainty.

Without clearly defined boundaries, AI agents can drift from their intended behavior. They may make assumptions that were never explicitly stated, take shortcuts that bypass established workflows, or respond in ways that are inconsistent with business logic. In multi-step processes, even a small deviation early on can cascade into larger issues, making outcomes harder to predict and control.
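A rough way to see why multi-step flows amplify small deviations: assume, purely for illustration, that each of the n steps in a flow stays on track independently with probability p. The chance that the whole flow stays on track is then:

$$
P(\text{flow stays on track}) = p^{n}, \qquad \text{e.g. } 0.95^{10} \approx 0.60
$$

Under these assumed numbers, a step that behaves as intended 95% of the time still leaves roughly a 40% chance that a 10-step flow goes wrong somewhere. Real errors are rarely independent, so this is only an intuition for the compounding effect and not a model of any specific agent.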

Why Secure AI Agent Development Matters

When an AI agent behaves unpredictably, the impact goes beyond technical performance. It becomes a business concern.

In Malaysia, where regulatory expectations around data privacy and governance continue to tighten, insecure AI systems can expose organizations to significant risk. An agent that accesses the wrong data, executes unintended actions, or produces unreliable outputs can lead to operational disruption and erode customer trust.

This is why security must be treated as a core part of AI development. It is what allows organizations to move beyond experimentation and deploy AI systems that are reliable, accountable, and ready for real-world use.

How to Build Secure AI Agents with Control, Safety, and Visibility

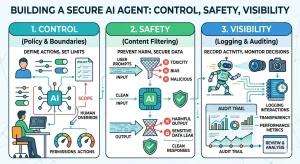

Building secure AI agents comes down to three core principles:

- Control to define what the agent is allowed to do

- Safety to ensure it behaves within acceptable boundaries

- Visibility to monitor and understand its actions

These elements work together: if any one of them is missing, the system becomes harder to manage and trust.

Control Starts with Boundaries

AI agents should be treated like any other system user, with clearly defined access and limitations.

Define Roles and Permissions

Apply role-based access control (RBAC) to ensure agents only access what they need. For example, a customer service agent should not have the ability to modify financial or pricing data.
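As an illustration, role-based access for agents can be as simple as a deny-by-default lookup that runs before any action. The sketch below assumes hypothetical role names and permissions (`customer_service_agent`, `MODIFY_PRICING`, and so on). It is not tied to any particular framework, and a real deployment would usually enforce the same rules in the identity or data layer as well.

```python
from enum import Enum, auto


class Permission(Enum):
    """Hypothetical permissions an agent can be granted."""
    READ_FAQ = auto()
    READ_ORDER_STATUS = auto()
    CREATE_SUPPORT_TICKET = auto()
    MODIFY_PRICING = auto()
    MODIFY_FINANCIAL_RECORDS = auto()


# Each agent role maps to the smallest permission set it needs.
ROLE_PERMISSIONS: dict[str, frozenset[Permission]] = {
    "customer_service_agent": frozenset({
        Permission.READ_FAQ,
        Permission.READ_ORDER_STATUS,
        Permission.CREATE_SUPPORT_TICKET,
    }),
    "finance_ops_agent": frozenset({
        Permission.READ_ORDER_STATUS,
        Permission.MODIFY_FINANCIAL_RECORDS,
    }),
}


class AccessDenied(Exception):
    """Raised when an agent attempts something outside its role."""


def authorize(role: str, permission: Permission) -> None:
    """Allow the call only if the role explicitly holds the permission.

    Unknown roles get an empty set, so the default is deny.
    """
    granted = ROLE_PERMISSIONS.get(role, frozenset())
    if permission not in granted:
        raise AccessDenied(f"role {role!r} lacks {permission.name}")


# The customer service agent can check an order...
authorize("customer_service_agent", Permission.READ_ORDER_STATUS)

# ...but any attempt to change pricing is refused.
try:
    authorize("customer_service_agent", Permission.MODIFY_PRICING)
except AccessDenied as err:
    print(err)  # role 'customer_service_agent' lacks MODIFY_PRICING
```

Keeping the mapping explicit and defaulting unknown roles to an empty set means that a new or misconfigured agent gets no access rather than too much.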

Limit Tool Access and High-Risk Actions

Adopt a least-privilege approach. Restrict access to tools that can perform sensitive actions, such as writing to databases or accessing personally identifiable information (PII).
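One way to apply least privilege to tools is to route every tool call through a gateway that knows which tools a given agent may use and which of them are high-risk. In the minimal sketch below, `ToolGateway`, the tool names, and the `high_risk` flag are all hypothetical. They stand in for whatever tool-calling mechanism your agent framework provides.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class Tool:
    name: str
    func: Callable[..., Any]
    high_risk: bool = False  # e.g. writes to a database or reads PII


class ToolNotPermitted(Exception):
    """Raised when an agent asks for a tool it has not been granted."""


class ToolGateway:
    """Exposes only an explicit allowlist of tools to a single agent."""

    def __init__(self, tools: list[Tool], allowed: set[str],
                 allow_high_risk: bool = False) -> None:
        self._tools = {t.name: t for t in tools}
        self._allowed = set(allowed)
        self._allow_high_risk = allow_high_risk

    def call(self, name: str, **kwargs: Any) -> Any:
        tool = self._tools.get(name)
        # Deny anything unknown or not on this agent's allowlist.
        if tool is None or name not in self._allowed:
            raise ToolNotPermitted(f"{name!r} is not on this agent's allowlist")
        # High-risk tools need a separate, explicit opt-in.
        if tool.high_risk and not self._allow_high_risk:
            raise ToolNotPermitted(f"{name!r} is high-risk and disabled for this agent")
        return tool.func(**kwargs)


# Hypothetical tools: one read-only lookup, one write that touches customer PII.
tools = [
    Tool("lookup_order", lambda order_id: {"order_id": order_id, "status": "shipped"}),
    Tool("update_customer_record", lambda customer_id, **fields: None, high_risk=True),
]

# Even though the write tool is listed, it stays blocked without the opt-in.
gateway = ToolGateway(tools, allowed={"lookup_order", "update_customer_record"})
```

Here `gateway.call("lookup_order", order_id="A123")` succeeds, while calling `update_customer_record` raises `ToolNotPermitted`. The design uses two separate gates, the allowlist and the high-risk opt-in, so granting a sensitive tool takes a deliberate decision rather than a single list edit.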

Guardrails Are Foundational

Safety mechanisms should be embedded directly into the system design. Industry best practices emphasize the importance of proactive guardrails to ensure AI systems operate within defined boundaries.

Policy Constraints and Fail-Safes

Define clear boundaries for what the agent can and cannot do. If an output violates a policy, the system should trigger a fail-safe, stopping execution before any action is taken.
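A fail-safe of this kind can be sketched as a check that runs between the agent proposing an action and the system executing it. The sketch below assumes that policies are plain functions returning a violation message. The refund limit (`RM500`) and the rule names are hypothetical examples, not recommended values.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Optional


@dataclass
class ProposedAction:
    """An action the agent wants to take, captured before anything runs."""
    tool: str
    args: dict[str, Any] = field(default_factory=dict)


# A policy inspects a proposed action and returns a violation message, or None.
Policy = Callable[[ProposedAction], Optional[str]]


def max_refund_policy(limit_myr: float) -> Policy:
    """Hypothetical rule: refunds above a fixed limit are out of scope."""
    def check(action: ProposedAction) -> Optional[str]:
        if action.tool == "issue_refund" and action.args.get("amount_myr", 0) > limit_myr:
            return f"refund exceeds RM{limit_myr:.2f} limit"
        return None
    return check


def no_record_deletion(action: ProposedAction) -> Optional[str]:
    """Hypothetical rule: agents may never delete records."""
    if action.tool == "delete_records":
        return "record deletion is not permitted for agents"
    return None


class PolicyViolation(Exception):
    """Raised by the fail-safe; the action is never executed."""


def execute_with_failsafe(action: ProposedAction,
                          policies: list[Policy],
                          executor: Callable[[ProposedAction], Any]) -> Any:
    """Evaluate every policy first and run the action only if all pass."""
    violations = [msg for msg in (p(action) for p in policies) if msg is not None]
    if violations:
        # Fail-safe: stop before any side effect happens.
        raise PolicyViolation("; ".join(violations))
    return executor(action)


POLICIES = [max_refund_policy(500.0), no_record_deletion]
```

All policies are evaluated before the executor is touched. A blocked action therefore leaves no partial side effects, and the exception message lists every rule it broke, which is useful for the audit trail.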

Human-in-the-Loop for Critical Decisions

For high-impact scenarios, human approval remains essential. Whether it is approving transactions or modifying system configurations, critical decisions should not be fully automated.
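In code, human-in-the-loop usually means the agent pauses on certain action types until someone explicitly approves. The minimal sketch below assumes a hypothetical set of high-impact action kinds and an `ask_human` callback standing in for the real approval channel (a ticket queue, a chat approval button, or similar).

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Any, Callable


class Decision(Enum):
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Action:
    kind: str                               # e.g. "transfer_funds", "update_config"
    details: dict[str, Any] = field(default_factory=dict)


# Hypothetical set of action kinds that always require a human sign-off.
HIGH_IMPACT_KINDS = {"transfer_funds", "update_config"}


def run_action(action: Action,
               execute: Callable[[Action], Any],
               ask_human: Callable[[Action], Decision]) -> dict[str, Any]:
    """Run low-impact actions directly; pause high-impact ones for approval.

    `ask_human` stands in for whatever approval channel is used (a ticket
    queue, a chat approval button, a console prompt) and returns a Decision.
    """
    if action.kind in HIGH_IMPACT_KINDS:
        if ask_human(action) is not Decision.APPROVED:
            # Rejected (or anything other than an explicit approval): do nothing.
            return {"status": "rejected", "kind": action.kind}
    return {"status": "executed", "kind": action.kind, "result": execute(action)}
```

The check is written so that only an explicit `Decision.APPROVED` lets a high-impact action through. A missing or unexpected answer therefore defaults to not acting.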

Visibility Is the Missing Layer

Control and safety are not enough if you cannot see how the agent operates.

Trace Prompts, Decisions, and Tool Usage

Maintain a clear audit trail of inputs, outputs, and actions. This helps teams understand how decisions are made and ensures accountability.
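A common starting point for such an audit trail is a structured, append-only log in which every model call and tool call becomes one record tied to a shared trace ID. The sketch below writes JSON Lines to any text stream. The field names and the `AuditLog` class are hypothetical, and in production you would typically redact PII before it is written.

```python
import io
import json
import uuid
from datetime import datetime, timezone
from typing import Any, Optional, TextIO


class AuditLog:
    """Append-only JSON Lines audit trail for one agent session."""

    def __init__(self, sink: TextIO, agent_id: str) -> None:
        self._sink = sink
        self.agent_id = agent_id
        # One trace ID links every record produced in this session.
        self.trace_id = str(uuid.uuid4())

    def record(self, event: str, *, prompt: Optional[str] = None,
               output: Optional[str] = None, tool: Optional[str] = None,
               tool_args: Optional[dict[str, Any]] = None) -> dict[str, Any]:
        entry = {
            "trace_id": self.trace_id,
            "agent_id": self.agent_id,
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "event": event,
            "prompt": prompt,
            "output": output,
            "tool": tool,
            "tool_args": tool_args,
        }
        # One JSON object per line keeps the log easy to append to and query.
        self._sink.write(json.dumps(entry) + "\n")
        self._sink.flush()
        return entry


# Usage: an in-memory sink here; a file, log pipeline, or database in practice.
buf = io.StringIO()
log = AuditLog(buf, agent_id="cs-agent-01")
log.record("model_call", prompt="Where is order A123?", output="Let me check that order.")
log.record("tool_call", tool="lookup_order", tool_args={"order_id": "A123"})
```

Because every record carries the same `trace_id`, a reviewer can later reconstruct the full sequence of prompts, outputs, and tool calls behind a single decision.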

Detect Failures Across Multi-Step Flows

AI agents often execute tasks across multiple steps. Visibility allows teams to identify where issues occur and resolve them before they escalate.
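To locate where a multi-step flow breaks, each step can be timed and recorded, with execution halting at the first failure. The sketch below is a simple sequential runner under that assumption. The step functions (`classify_intent`, `validate_intent`, `lookup_order`) are hypothetical, and `classify_intent` deliberately returns an incomplete result to mimic a model output that drifted.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable, Optional


@dataclass
class StepResult:
    name: str
    ok: bool
    duration_ms: float
    error: Optional[str] = None


@dataclass
class FlowTrace:
    steps: list[StepResult] = field(default_factory=list)

    @property
    def failed_step(self) -> Optional[StepResult]:
        """The first step that failed, or None if every step succeeded."""
        return next((s for s in self.steps if not s.ok), None)


def run_flow(steps: list[tuple[str, Callable[[Any], Any]]],
             initial: Any) -> tuple[Any, FlowTrace]:
    """Run steps in order, feeding each output into the next step.

    Each step is timed and recorded. Execution stops at the first failure,
    so a bad intermediate result is not passed on to later steps.
    """
    trace = FlowTrace()
    value = initial
    for name, fn in steps:
        start = time.perf_counter()
        try:
            value = fn(value)
        except Exception as exc:
            elapsed = (time.perf_counter() - start) * 1000
            trace.steps.append(StepResult(name, False, elapsed, repr(exc)))
            return None, trace
        elapsed = (time.perf_counter() - start) * 1000
        trace.steps.append(StepResult(name, True, elapsed))
    return value, trace


# Hypothetical steps for a refund request.
def classify_intent(message: str) -> dict[str, Any]:
    # Stand-in for a model call that (here) fails to extract an order ID.
    return {"intent": "refund", "order_id": None}


def validate_intent(parsed: dict[str, Any]) -> dict[str, Any]:
    if not parsed.get("order_id"):
        raise ValueError("model output is missing order_id")
    return parsed


def lookup_order(parsed: dict[str, Any]) -> dict[str, Any]:
    return {**parsed, "status": "shipped"}


result, trace = run_flow(
    [("classify_intent", classify_intent),
     ("validate_intent", validate_intent),
     ("lookup_order", lookup_order)],
    "I want a refund",
)
```

In this run, `trace.failed_step.name` is `"validate_intent"` and `lookup_order` never executes. The validation step catches the upstream drift at the point where it happened, before it can cascade into an action.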

From Black Box to Controlled AI Agent Applications with Couchbase

To support secure AI operations, businesses need a data platform that enables both performance and observability. Couchbase provides this foundation through solutions like Couchbase Capella and its AI services.

Instead of treating AI as a “black box,” organizations can gain better control and insight into how agents operate.

End-to-End Agent Tracing: Capture and analyze agent interactions in real time, including memory, prompts, and decisions. This creates a clear audit trail for every action taken.

Governance and Debugging at Scale: With flexible data models and built-in capabilities like vector search, teams can update knowledge, refine policies, and troubleshoot issues without disrupting operations.

Build and Scale Secure AI Agents with CTM & Couchbase

Building autonomous AI systems does not have to come at the cost of control. Computrade Technology Malaysia (CTM), part of CTI Group, in partnership with Couchbase, helps organizations design and implement AI agents that are secure, scalable, and aligned with business requirements.

From architecture design to deployment and governance, CTM supports Malaysian enterprises in building AI systems that deliver value without introducing unnecessary risk.

Bring control and visibility to your AI initiatives. Talk to our team to learn more about secure, scalable AI agent architectures tailored to your business.

Author: Wilsa Azmalia Putri – Content Writer CTI Group